Zip files are a common file format for sharing collections of files or for compressing large files. I’m going to take a look at how JSL can be used to handle these files without first manually unzipping the file.

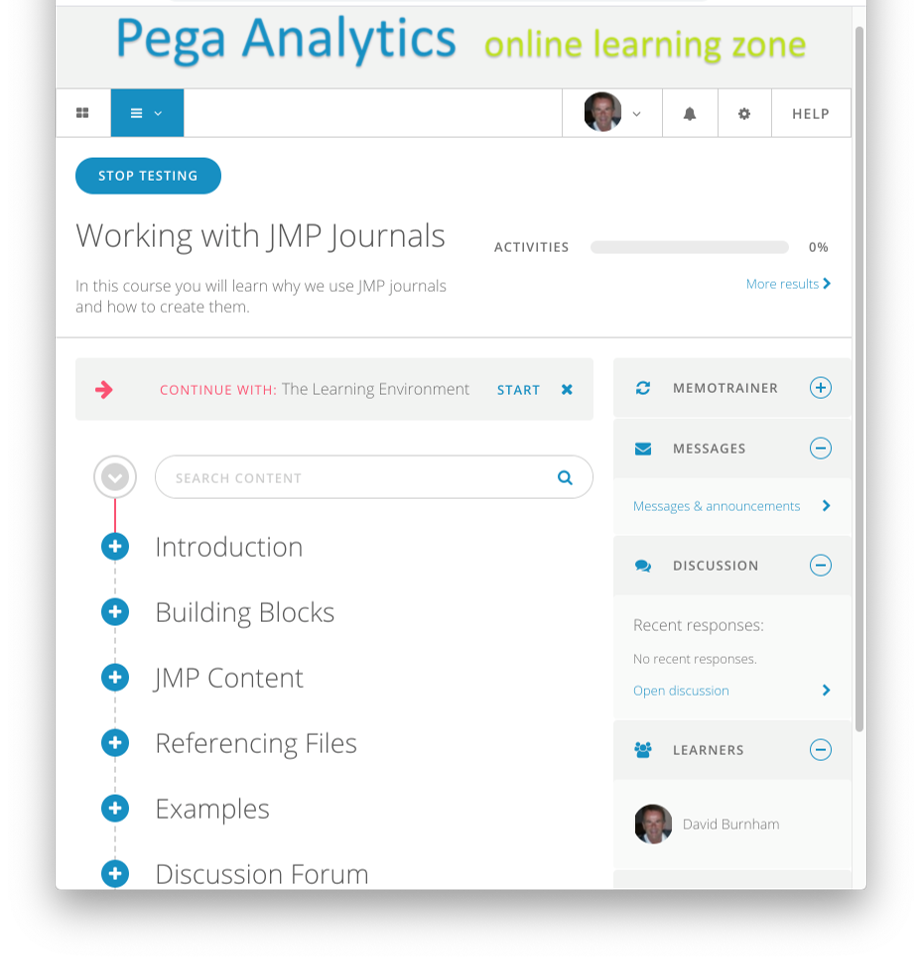

Online Learning Zone

Transparency

Here’s a handy little function that applies a transparency effect to a solid (r, g, b) colour by blending it with a white background:

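```
// Simulate a transparent colour by blending (r, g, b) with a white background.
// r, g and b are in the range 0-1; opacity = 1 returns the original colour,
// opacity = 0 returns white.
TransparentRGB = Function( {r, g, b, opacity = 0.65}, {Default Local},
	red = opacity * r + (1 - opacity);
	green = opacity * g + (1 - opacity);
	blue = opacity * b + (1 - opacity);
	Return( RGB Color( red, green, blue ) );
);
```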

File Handling

The JMP scripting language has a number of convenient functions for handling files external to JMP. Here’s an example:

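```
// Purge the temporary folder: delete every file it contains,
// leaving any sub-folders in place. Assumes tmpDir holds the folder path.
files = Files In Directory( tmpDir );
For( i = 1, i <= N Items( files ), i++,
	// Files In Directory returns names relative to tmpDir, so build a full path
	path = Convert File Path( files[i], Base( tmpDir ) );
	If( !Is Directory( path ),
		Delete File( path )
	);
);
```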

Code Folding

Code folding allows you to collapse a block of code – you can use it to focus on high-level structure without getting lost in the detail.

To enable code folding, turn on the option in the Script Editor section of Preferences, found under the File menu.

I use it with my user-defined functions to get an overview of the contents of an include file. Combined with appropriately placed comments, this helps to summarise a library of functions. Here is an example:

Reverse Process Capability

Process capability is a well-established technique for evaluating the degree to which a process is capable of delivering a product within specification. But what if the specifications are unknown or at best tentative?

The calculations of process capability analysis can be reversed so that, for a given set of target capability values, the associated specification limits can be generated. The calculation is straightforward for a normal distribution but needs a bit more thought when it comes to asymmetric distributions.
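The sketch below shows the reversed calculation. It is a minimal Python (standard library) sketch, not the post's own JSL. For the normal case it assumes a centred process, so Cp = Cpk. For the asymmetric case it *assumes* the percentile method: the median and the 0.135 and 99.865 percentiles stand in for the mean and ±3σ, and each side is scaled separately. The post may handle skewed distributions differently. All function names are hypothetical.

```python
import math
from statistics import NormalDist

# Tail area beyond 3 sigma on each side, the usual capability convention
P_LOW = NormalDist().cdf(-3.0)   # ~0.00135
P_HIGH = 1.0 - P_LOW             # ~0.99865


def spec_limits_normal(mean, sigma, cpk_target):
    """Limits giving Cp = Cpk = cpk_target for a normal process centred on mean."""
    half_width = 3.0 * sigma * cpk_target
    return mean - half_width, mean + half_width


def spec_limits_percentile(quantile, cpk_target):
    """Reverse the percentile method for any distribution (an assumed approach).

    quantile maps a probability p to the p-th quantile (inverse CDF).
    Each side is scaled from the median separately, so a skewed
    distribution gets asymmetric limits.
    """
    med = quantile(0.5)
    lsl = med - cpk_target * (med - quantile(P_LOW))
    usl = med + cpk_target * (quantile(P_HIGH) - med)
    return lsl, usl


def lognormal_quantile(mu, sigma):
    """Inverse CDF of a lognormal whose log is Normal(mu, sigma)."""
    z = NormalDist()
    return lambda p: math.exp(mu + sigma * z.inv_cdf(p))


print(spec_limits_normal(10.0, 0.5, 1.33))
print(spec_limits_percentile(NormalDist(10.0, 0.5).inv_cdf, 1.33))  # same limits
print(spec_limits_percentile(lognormal_quantile(0.0, 0.25), 1.33))  # asymmetric
```

For a normal distribution the percentile version reduces to mean ± 3σ·Cpk, so the two approaches agree. For a right-skewed lognormal, the upper limit sits further from the median than the lower one. With a strongly skewed distribution and a large target, this linear rescaling can push the lower limit below zero.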

The Lognormal Distribution

The lognormal distribution is a commonly used distribution for modelling asymmetric data. It’s just the log of a normal distribution, right? Well, no, it’s actually the other way around: you take the log of a lognormally distributed variable to arrive at a normal distribution. Maybe it’s just me, but I always have a bit of a mental block about this; it always feels a bit back to front.

In this post I will explore the relationship between a lognormal distribution and a normal distribution.
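The direction of the relationship is easy to check numerically. This is a small Python sketch, not the post's JSL, and the parameter values are arbitrary. It draws a lognormal sample, takes logs, and compares the result with the normal parameters used to generate it. It also shows that the lognormal CDF is the normal CDF evaluated at log(x). `lognormal_cdf` is a hypothetical helper.

```python
import math
import random
from statistics import NormalDist, fmean, median, stdev


def lognormal_cdf(x, mu, sigma):
    """P(X <= x) for a lognormal X whose log is Normal(mu, sigma)."""
    if x <= 0:
        return 0.0
    # The CDF is just the normal CDF evaluated at log(x)
    return NormalDist(mu, sigma).cdf(math.log(x))


mu, sigma = 1.0, 0.5
rng = random.Random(1)

# Draw a lognormal sample, then take logs: the result should look Normal(mu, sigma)
x = [rng.lognormvariate(mu, sigma) for _ in range(100_000)]
logs = [math.log(v) for v in x]

print("mean of log(x):", fmean(logs), "expected", mu)
print("sd of log(x):  ", stdev(logs), "expected", sigma)
# The median maps straight across (exp(mu)); the mean does not
print("median of x:   ", median(x), "expected", math.exp(mu))
print("mean of x:     ", fmean(x), "expected", math.exp(mu + sigma**2 / 2))
```

The last two lines highlight a common trap. Exponentiating the normal mean gives the lognormal *median*, not its mean.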

Calculating dppm

In my last post I introduced the idea of using the JSL script editor as a simple command-line calculator, and prior to that I discussed how the process capability indices Cp and Cpk are a convenient shorthand notation but suffer from a lack of transparency. Today I will bring these two themes together by showing how I can use the JSL script editor to calculate defective parts per million (dppm) for a given pair of capability indices Cp and Cpk.
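As a sketch of the arithmetic, this Python version assumes a normally distributed process with Cpk ≤ Cp. The nearer specification limit lies 3·Cpk standard deviations from the mean, and the farther one lies 3·(2·Cp − Cpk) standard deviations away. Expected dppm is the sum of the two normal tail areas times 10⁶. In the JSL script editor, the same expression could be written with `Normal Distribution()` in place of the normal CDF. The function name `dppm_from_capability` is hypothetical.

```python
from statistics import NormalDist


def dppm_from_capability(cp, cpk):
    """Expected defective parts per million for a normal process.

    Assumes normality and cpk <= cp. The nearer limit sits 3*Cpk sigmas
    from the mean, the farther one 3*(2*Cp - Cpk) sigmas away.
    """
    if cpk > cp:
        raise ValueError("Cpk cannot exceed Cp")
    phi = NormalDist().cdf  # standard normal CDF
    near_tail = phi(-3.0 * cpk)
    far_tail = phi(-3.0 * (2.0 * cp - cpk))
    return 1e6 * (near_tail + far_tail)


print(dppm_from_capability(1.0, 1.0))    # centred process, limits at +/-3 sigma
print(dppm_from_capability(1.33, 1.0))   # shifted process: nearly all defects on one side
```

For a centred process with Cp = Cpk = 1, this gives the familiar figure of roughly 2700 dppm. Once the process shifts, the far tail quickly becomes negligible.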

The Script Editor as a Calculator

You don’t need to be a programmer to make productive use of the JSL script editor. The editor can be used by non-programmers as a simple command-line calculator that provides access to JMP’s library of mathematical and statistical functions.

Capability Indices and Process Shift

In this post I will derive a simple relationship between process shift and the capability indices Cp and Cpk.
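For reference, here is one standard way of writing that relationship. It assumes the usual definitions, with process mean μ, standard deviation σ and specification midpoint m. The post's own derivation may be presented differently.

$$
\begin{aligned}
m &= \tfrac{1}{2}(\mathrm{USL} + \mathrm{LSL}), \qquad d = \tfrac{1}{2}(\mathrm{USL} - \mathrm{LSL}) \\
C_p &= \frac{\mathrm{USL} - \mathrm{LSL}}{6\sigma} = \frac{d}{3\sigma} \\
C_{pk} &= \frac{\min(\mathrm{USL} - \mu,\ \mu - \mathrm{LSL})}{3\sigma} = \frac{d - |\mu - m|}{3\sigma} \\
\Rightarrow\quad \frac{|\mu - m|}{\sigma} &= 3\,(C_p - C_{pk})
\end{aligned}
$$

For example, a process with Cp = 1.33 and Cpk = 1.00 has its mean shifted about 3 × 0.33 ≈ 1σ from the centre of the specification.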